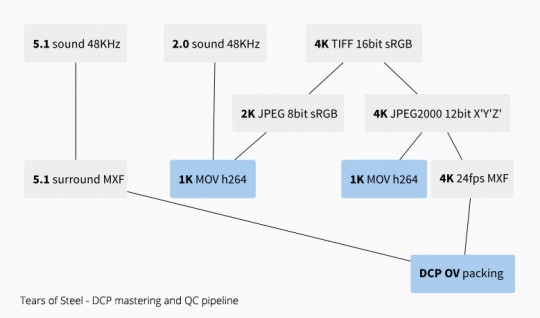

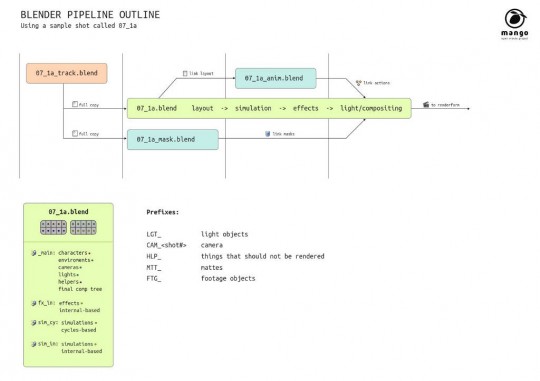

This is a report on how we created the 4K DCP of Tears of Steel for theatrical distribution. Our tools of choice were:

- Blender (generation of the 16bit TIFF frames)

- OpenDCP (conversion of the frames to JPEG2000 and wrapping of picture and sound)

- EasyDCP Player (checking integrity and specs of the DCP)

In this simplified DCP mastering process we will go through a few linear steps:

- Gathering raw images and sound in a DCI (Digital Cinema Initiatives) compliant format

- Wrapping them separately into MXF containers

- Indexing and inserting them into the Digital Cinema Package

- Quality control

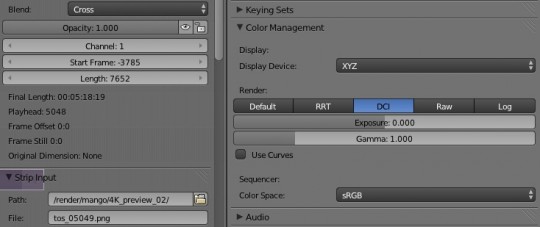

First off, we need to generate an image sequence of the whole short film, using an appropriate image format, such as 16bit sRGB TIFF. In our case that means 17616 frames, taking up 850GB of space. The 4K DCI compliant resolution for our aspect ratio is 4096x1716px.
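As a quick sanity check on these numbers, here is a minimal sketch of the arithmetic. The 24 fps frame rate, the 3-channel layout and the decimal reading of "GB" are assumptions, not figures stated above, and `frame_stats` is a hypothetical helper.

```python
FRAME_COUNT = 17616          # frames in the exported sequence (from the report)
WIDTH, HEIGHT = 4096, 1716   # 4K DCI resolution for this aspect ratio (from the report)
SEQUENCE_BYTES = 850e9       # 850GB, ASSUMING decimal gigabytes
FPS = 24                     # ASSUMPTION: the usual cinema frame rate


def frame_stats(frame_count=FRAME_COUNT, width=WIDTH, height=HEIGHT,
                fps=FPS, sequence_bytes=SEQUENCE_BYTES,
                channels=3, bytes_per_channel=2):
    """Derive running time, aspect ratio and per-frame sizes.

    channels=3 (RGB, no alpha) is an assumption; bytes_per_channel=2
    corresponds to 16bit samples.
    """
    return {
        "duration_s": frame_count / fps,
        "aspect_ratio": width / height,
        # Uncompressed pixel payload of one frame, in MB (10^6 bytes).
        "raw_frame_mb": width * height * channels * bytes_per_channel / 1e6,
        # Average size of one TIFF on disk, in MB, from the reported total.
        "avg_frame_mb": sequence_bytes / frame_count / 1e6,
    }


if __name__ == "__main__":
    for name, value in frame_stats().items():
        print(f"{name}: {value:.3f}")
```

Under those assumptions the sequence runs 734 seconds (12 min 14 s) at an aspect ratio of roughly 2.39:1. The raw pixel payload and the average size on disk come out in the same range; the exact gap depends on details not stated here, such as an alpha channel, TIFF overhead, or how the gigabytes were counted.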

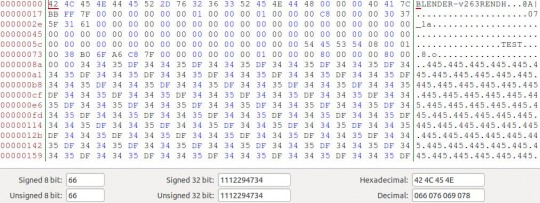

Once the export has succeeded, we can convert that image sequence into a JPEG2000 12bit XYZ sequence (with a .j2c extension). For this we use OpenDCP, a great free tool that offers both a GUI and a CLI. Once converted, the image sequence for the short film shrinks to around 12GB.
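To put the 12GB figure in context, the sketch below estimates the average picture bitrate and the overall size reduction. It assumes 24 fps and decimal gigabytes, and compares against the 250 Mbit/s picture bitrate ceiling from the DCI specification; `average_bitrate_mbit_s` is a hypothetical helper, and the result is only an average, not the peak rate a player would see.

```python
FRAME_COUNT = 17616        # frames in the sequence (from the report)
FPS = 24                   # ASSUMPTION: the usual cinema frame rate
TIFF_BYTES = 850e9         # 850GB source sequence, ASSUMING decimal gigabytes
J2C_BYTES = 12e9           # ~12GB JPEG2000 sequence, ASSUMING decimal gigabytes
DCI_MAX_MBIT_S = 250       # DCI ceiling for the picture track bitrate


def average_bitrate_mbit_s(total_bytes, frame_count=FRAME_COUNT, fps=FPS):
    """Average bitrate in Mbit/s of a sequence played back at `fps`."""
    duration_s = frame_count / fps
    return total_bytes * 8 / duration_s / 1e6


if __name__ == "__main__":
    rate = average_bitrate_mbit_s(J2C_BYTES)
    print(f"average picture bitrate: {rate:.1f} Mbit/s")
    print(f"within DCI ceiling: {rate <= DCI_MAX_MBIT_S}")
    print(f"size reduction: about {TIFF_BYTES / J2C_BYTES:.0f}x")
```

With these inputs the average works out to roughly 131 Mbit/s, comfortably below the ceiling, and the JPEG2000 sequence is about 70 times smaller than the TIFF source.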

In order to speed up the process and skip the 16bit TIFF generation, we tried to export the edit in JPEG2000 XYZ directly from Blender (with the benefit of using a render farm), but the image format was not accepted by OpenDCP for the MXF wrapping. Hopefully this will be fixed in the future, as it would save quite some time and storage space.