Hello everyone,

Apparently you can’t do computer graphics without computers. But which computers exactly, and how many of them, are we using?

A couple of different hardware configurations are used in the studio by the artists and by the render farm, and this short post describes exactly which hardware we’re using for the Mango project. In addition, benchmark results for CPU and GPU rendering on these systems are provided at the end of the post.

Studio Server

The studio server is a fancy dual-Xeon system with 12 GB of RAM. Both processors are quad-core with hyper-threading support, running at 2.27 GHz. Its own hard disk space is only a 146 GB SCSI disk (which means it’s superfast), but there’s an external hardware disk array attached to this server. In fact, there are two RAID arrays there: one is only 1 TB and is used for the day-to-day shared folder and production svn, and the other is a 5 TB array used for rendered data. I’m not sure about the RAID levels used, but I think it’s RAID 1 (mirror) for the smaller array and RAID 5 for the bigger one.

It’s a headless server so there’s no fancy video card in this computer.

Render Nodes

Currently we don’t have justacluster running (it’ll probably be configured this week), so there are only 4 Dell nodes used by the render farm. Each Dell node is a dual-Xeon station, also with quad-core processors with hyper-threading support, also running at 2.27 GHz. They’ve got 24 GB of RAM and 160 GB of space on a SCSI disk.

These are also headless systems.

Systems used by artists

- Kjartan is using a new system delivered to us by HP, which is a single-Xeon station, quad-core without hyper-threading, running at 3.5 GHz. It’s got 8 GB of RAM and 1 TB of hard drive space. This machine has a Quadro 2000 video card.

- Francesco is also using an HP station with the same processor, memory and hard drive, but the video card was replaced with an 8800 GTS.

- Nicolo is using a station based on a Core i7 920 processor, which is quad-core with hyper-threading support, running at 2.67 GHz. This computer has 12 GB of RAM and a 500 GB hard drive. It has two video cards: one is an 8400GS and the other is the Quadro 2000 taken from Francesco’s computer.

- Ian and Sebastian are using identical systems with a dual-Xeon configuration. These processors are quad-core with hyper-threading support and run at 2.66 GHz. There’s 24 GB of RAM and an approximately 380 GB hard drive. The video card is a GTX260.

- Jeremy is using much the same computer as Nicolo, with a Core i7 processor, but with a different video card installed: a GT220.

Network

We’ve got two separate networks here. One is used by the computers in the studio and the other by the render farm. Both are gigabit networks, and the separation is needed to reduce the network latency caused by the network activity of the render farm and the artists. To be even fancier, the studio computers are attached to a gigabit switch which is connected to the server with a 10 Gbit connection.

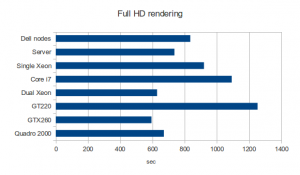

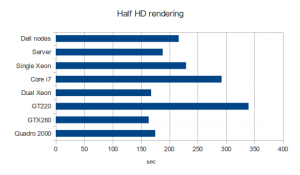

Benchmarks

The benchmark was done using the BMW model from Mike Pan, which you can find in this blenderartist thread. There were 4 setups: FullHD (1920×1080) and HalfHD (960×540), each with CPU and GPU rendering. Here are the render times for the different systems (the time in brackets is the HalfHD render time).

- Dell nodes: 13:54.73 (3:36.34)

- Server: 12:16.38 (3:08.18)

- Single Xeon stations used by Kjartan and Francesco: 15:19.74 (3:49.34)

- Core i7 stations: 18:12.02 (4:51.83)

- Dual Xeon stations used by Sebastian and Ian: 10:30.83 (2:47.79)

- GT220: 20:52.60 (5:39.25)

- GTX260: 9:53.98 (2:43.56)

- Quadro 2000: 11:10.93 (2:55.09)

-sergey-

So, wouldn’t it be great to have a nice GPU render farm? :)

I have a dream :

http://www.carri.fr/html/config_complete/config.php?id=130106

…

Nothing else to say: probably 1000 paths a second :)

What are all those numbers before that strange “E” with 2 horizontal lines symbol ]:)

look at this page: http://www.nvidia.it/object/turnkey-gpu-clusters-it.html 42 TFLOPS at 129’000 euros, instead of 30 at 155K euros

I’m sorry… the prices are pretty much the same, except for the taxes…

Unfortunately, not. GPU rendering is much faster when rendering a sample scene like this, but we cannot render actual scenes on these cards because of memory issues. And it’s a big challenge to make GPU rendering work well when not all the data fits in video card memory, and the overhead of sending data from RAM to the video card might make GPU rendering much slower.

Probably using cards like Tesla would help here, but that’s a bit more expensive than a dual-CPU configuration with the new fancy LGA2011 processors, which seem really promising.

We don’t have such hardware here; maybe somebody can test how those guys perform and share the results with us? :)

Oh, sorry for my post … I was absolutely not constructive (too many numbers before the e ^^, gonzalo) but I thought you could find a cheaper solution than this. On the NVIDIA site there was a link for a home server with 2 Xeons + 2 Tesla GPUs + 16 GB RAM + motherboard + screen + case for about 16000 e.

I just thought that the Fermi interface, which allows high memory per CUDA core, would solve the problem.

Fermi also allows better memory management in CUDA threads than other CUDA architectures. Since CUDA 4.0 expands CUDA’s possibilities to everything that can be done in C++ (especially classes and object-oriented programming), I think that if Brecht develops some tiled BVH to dispatch the tasks to CUDA cores, it would work well.

I think they already use this in Octane or in the new NVIDIA renderer.

The Tesla cards with ECC, an impressively big cache (up to 16 GB for a single card) and a high-speed bus can solve all the problems you’ve got.

So do you guys think you can ask NVIDIA for a gold sponsorship?

If so you have to start a global conversion to make a full CUDA and/or OpenCL Blender (that’s a joke, but if at least particles, smoke and fluid can benefit from that, it would help you a lot).

Keep on going!

PS: for me it’s certain that Fermi-based cards, which handle giga per second for numerical simulations, wouldn’t have any problem tackling the footage, even at 4K.

The question is: will this fancy computer from NVIDIA be 20x faster than one of our nodes?

We don’t want to reach the maximum speed of a single node; we want the whole animation to render as fast as possible at an affordable cost. I mean, for sure our single node is not as fancy, but there are 4 Dell nodes and 16 nodes in justacluster, and all together they are a bit cheaper than the one dual-Xeon dual-Tesla system you’re mentioning, and I’m not sure it would render the animation in even the same time as our current farm.

Will the JustaCluster undergo some changes?

I want! How many times more processing power is that than the Elephants Dream team had then? :D

If estimating using Moore’s law, the processing power would be 12-13 times greater…
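
For what it’s worth, a Moore’s-law style estimate like this depends entirely on the time span and the doubling period you assume, and the comment states neither. Here is a minimal sketch of the arithmetic; the function name `moores_law_factor` and the default 18-month doubling period are assumptions for illustration only.

```python
def moores_law_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Growth factor after `years` if capacity doubles every `doubling_period_years`.

    Assumption: the 1.5-year (18-month) default is one commonly quoted doubling
    period; a 2-year period is also often used and gives smaller factors.
    """
    if doubling_period_years <= 0:
        raise ValueError("doubling period must be positive")
    return 2.0 ** (years / doubling_period_years)


if __name__ == "__main__":
    for years in (5.0, 5.5, 6.0):
        for period in (1.5, 2.0):
            factor = moores_law_factor(years, period)
            print(f"{years:.1f} years, doubling every {period:.1f} years: x{factor:.1f}")
```

Under the 18-month assumption, a factor of 12-13 corresponds to a gap of roughly five and a half years; with a 2-year doubling period the same gap gives a noticeably smaller factor.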

It looks like these hardware configurations are good enough for BI (CPU compute), but a bit tight for Cycles (GPU compute)? Do you plan an upgrade if Cycles is finally chosen?

As I mentioned to Lars, memory is the biggest problem. It’s easy to have lots of memory on the motherboard but not on the GPU. And this is a problem for real scenes (even for test scenes for real shots :)

Couldn’t you just split renders between various GPUs and then composite?

Not actually sure I got you right, but rendering even a small piece of a frame might require the whole scene database to be loaded onto the video card. For example, when you’re using reflections.

Of course… I forgot that “little” limitation… ;)

mmh… so this casts new light to the direction Cycles will go over next months, if you really want to render mango using cycles it’s time to leave behind the immense effort and a lot of time needed to assure 1:1 gpu compatibility with what can be achieved with a pure cpu approach, am I far from truth?

If you don’t have any ATI cards, how do you plan on solving Cycles issues around that?… :( Got a new Mac, …it’s got ATI…

We are in direct contact with AMD about the ATI opencl progress. The ball is really on their side first… next to that, Apple also makes their own OpenCL.

I’m working on an i7 workstation, 12 GB of RAM, with an NVIDIA 275 card. Next week the NVIDIA card is to be upgraded to a Quadro 2000. Reading this blog, it’s not clear to me whether this upgrade really benefits me much for 400 bucks! Instead I think the Quadro gives better stability and better OpenGL support. What’s your advice about Quadro and Blender? Does the price tag justify the upgrade? Thanks for all your excellent work!

What OS and video driver do you use on your machines?

Where do the network cables come together and how do they run through the Institute? And will all servers/switches/render nodes be in one room this time?

All studio computer cables (1 Gbit) go to a switch with 10 Gbit port + 10 Gbit cable to server with 10 Gbit network card. Farm switch has 4×1 Gbit cables to this server. Quite good :)
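
As a rough sanity check of those link speeds, here is a minimal sketch of the worst-case load on each path to the server. The count of six studio workstations is an assumption taken from the artists named in the post, and treating the 4×1 Gbit farm cables as a single aggregated 4 Gbit/s path is also an assumption; `oversubscription` is just an illustrative helper name.

```python
def oversubscription(clients: int, client_link_gbit: float, uplink_gbit: float) -> float:
    """Worst-case client demand divided by uplink capacity.

    Values above 1.0 mean the clients together could saturate the uplink.
    """
    if uplink_gbit <= 0:
        raise ValueError("uplink capacity must be positive")
    return clients * client_link_gbit / uplink_gbit


# Assumption: six artist workstations, each on a 1 Gbit link, sharing the 10 Gbit uplink.
STUDIO = oversubscription(clients=6, client_link_gbit=1.0, uplink_gbit=10.0)

# Assumption: four Dell nodes on 1 Gbit links, with 4 x 1 Gbit cables to the server
# acting as one aggregated 4 Gbit/s path.
FARM = oversubscription(clients=4, client_link_gbit=1.0, uplink_gbit=4.0)

if __name__ == "__main__":
    print(f"studio uplink load at worst case: {STUDIO:.0%}")
    print(f"farm uplink load at worst case:   {FARM:.0%}")
```

Under these assumptions neither path to the server is oversubscribed, even if every machine transfers at full speed at once.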

There are 3-4 switches in the studio now, with a number of routers; I’ve lost precise insight into the exact workings.

What kind of 10 Gbit connection? SFP?

And is the switch seen on the picture posted by Sergey the farm switch?

Eeh.. Can’t actually answer what exactly it is at this moment. It was set up before I arrived.

The switch from the photo is used only to connect the 4 Dell nodes to the server. The studio switch and some extra internet modem are in different places.

Hi guys,

Sounds like you are progressing well with the hardware. Can I ask why you have chosen Quadro 2000 cards? – You only have 192 cores on these devices and they probably cost a fortune? Would a GTX 460 not have been better? Even my SE version has 228 cores and was really cheap! – Cycles is really quick on this card and even quicker on the full fat 460GTX (Not SE). – Surely realtime previews would be smoother on faster & cheaper cards?

Also

How is the render farm configured with respect to software? Are you using Blender’s internal network render, or are you developing something new or using an open source render queue manager?

Will the render farm configuration be included on the DVD set like previous releases?

Can’t wait to see the final movie, should be epic.

Keep up the good work on this massive task!!!!

We didn’t actually choose anything :) These systems were provided to us by HP.

About the render farm.. basically we’re using the same system as for Sintel, with some minor tweaks. Not sure, but I think it’ll be included on the DVD.

Were you using CUDA or OpenCL for the benchmark on the GT220? Because on my computer, which has a GT240, neither of them works (CUDA needs a newer shader model version, and OpenCL says in the console that the instructions “cvt” and “mov” require SM 1.3 or higher or a map_f64_to_f32 directive)

What model and size of displays do you use?

2 x Eizo FlexScan S2433WFS-GY 24″ ?

Hello guys,

Great work….good inspiration all round……..

CCC 12.3 is out for ATI cards, and there seems to be progress in their 12.4 OpenCL driver

All your scenes require high amounts of GPU memory; maybe a Sapphire 7970 6GB would be a solution………..

Along the lines of AMD/ATI, how come none of your hardware is AMD…….as AMD has 8 cores (soon to be 10) as well as 16-core Opterons…….PS it would be cheaper to build PCs along these lines

by yourselves. …..PS you’d have more money for beefier GPUs like a Quadro 6000 or even Tesla, or even quad-SLI 580……..

Of course this may be a debate as to whether you use real-world consumer desktops or industry-standard desktops……

Other than this, great work; you are inspiring me: I’d love to be a programmer

My PC build is a Phenom 6 1055T, 16 GB RAM, a 500 GB laptop hard drive and a standard hard drive

with an ATI 6950 2GB. If I had known Cycles was coming out at the time of the build, I would have bought an NVIDIA 560 Ti 2GB……I was using Ubuntu at one point, but openclpy broke my system so I went back to Windows 7. The build cost 900-1200 including a legit Windows 7 and a 21″ HD monitor

Or even quadfire 7970 when AMD fix up OpenCL; according to tomshardware these cards perform really well in GPU compute

Also, if possible, when someone finds a way to share render data over all available GPUs, the 6GB versions of these would be awesome for Cycles

PS with AMD’s newly released 12.4 OpenCL drivers the kernel has to be compiled every time the user hits the render button/rendered view……..maybe there’s an alternative way of doing this, or one option is to make the compilation of the kernel multi-threaded, as a way to speed up the compilation of the kernel

PSSS can’t wait for the end result

PSSSS maybe in the tutorials you could show an overview of the different types of bones used in that wicked robot

Wonderful web site. Plenty of helpful information here. I’m sending it to a few pals and also sharing on delicious. And certainly, thanks for your effort!

Very impressed with project mango thus far. If I may, I do just have a quick question or two about the render farm.

What software are you using to power the renderfarm?

I was also wondering if Blender’s integrated network render feature is still being worked on, and if you think it’ll ever be a tad more friendly? (dumbed down so someone like me can just install multiple copies on a render farm and hit “network render” on one PC and have it done).