Yesterday we had the chance to test the Sony F65 at Camalot (who were very nice and helpful). It was very exciting to see such an enormous camera in the hands of our DP. But even though the whole process is digital all the way, getting hold of the data is not as easy as you might think (see yesterday’s post).

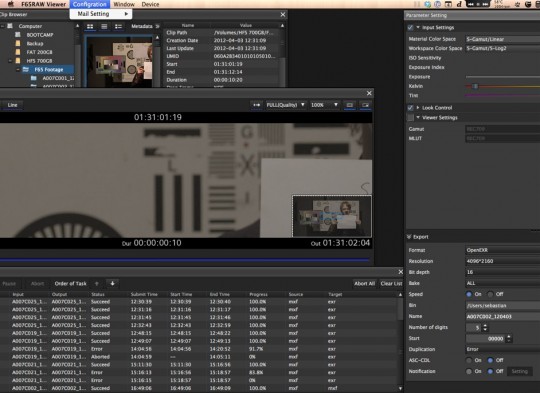

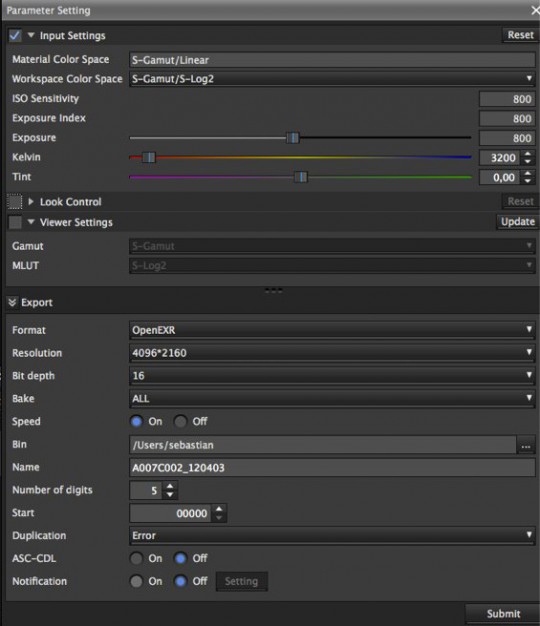

So, after getting the data out of the camera, safely transferring it to the Blender Institute and plugging it into the computer, the question is: Now what? We have been shooting in Sony F65 Raw format (.mxf), which generates enormous files. Ten seconds of footage is 2.68GB of raw data, unreadable unless you install the Sony F65 viewer app (of course only possible after the annoying registration of all your data, and it comes with a not very user-friendly interface. But hey, you can even write emails with it!).
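To get a feeling for what that means for storage, here is a rough back-of-the-envelope sketch. It only uses the figure above (10 seconds = 2.68GB) and assumes the data rate stays constant, which may not hold for every shooting mode; the function name is made up for illustration.

```python
# Figures from the post: 10 seconds of F65 RAW came to 2.68 GB.
CLIP_SECONDS = 10
CLIP_GIGABYTES = 2.68


def gigabytes_for(seconds):
    """Estimate storage for a shooting time, assuming a constant data rate."""
    return CLIP_GIGABYTES / CLIP_SECONDS * seconds


per_second = gigabytes_for(1)
per_minute = gigabytes_for(60)
per_hour = gigabytes_for(3600)

print(f"{per_second:.3f} GB/s, {per_minute:.1f} GB/min, {per_hour:.0f} GB/h")
```

So an hour of raw footage at this rate lands just under a terabyte, before any EXR export is added on top.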

From this app, for which I wasn’t able to find any documentation, you can export to EXR, DPX and MXF (again, this would not be readable for FOSS). The DPX files are also unreadable for some reason, so we go to OpenEXR, as planned. (Just in case someone is interested in that, here’s a patch for DPX. http://projects.blender.org/tracker/?group_id=9&atid=127&func=detail&aid=27397. Any developer willing to have a look into it?)

But how and what shall we export? S-Log? Linear?

The thing is, in any case there is still quite a bit of noise. Apparently Sony suggests doing some heavy pre-processing:

Just as Bayer pattern RAW files need to be de-Bayered to generate viewable RGB images, F65 RAW files need to be developed. While the structure of the F65 RAW file is completely determined, the development process allows for considerable freedom and creativity. Post houses and software vendors are free to pursue everything from quick and simple single-channel interpolation to edge-directed interpolation, constant-hue based interpolation, Laplacian second-order gradient enhancement and even high-frequency reconstruction. This means you can match the de-mosaicking to your budget, your time constraints and your artistic ambitions.
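For anyone curious what the "quick and simple single-channel interpolation" end of that list looks like, here is a minimal sketch. It assumes a plain RGGB Bayer mosaic stored as a list of rows, which is not the F65 sensor layout and not Sony's development process; it only fills in the green channel by averaging neighbouring green samples.

```python
def interpolate_green(mosaic):
    """Return a full green plane from an RGGB Bayer mosaic.

    Green samples sit where (row + column) is odd. At red and blue sites
    the green value is the average of the up/down/left/right neighbours,
    which are all green sites in this layout.
    """
    height, width = len(mosaic), len(mosaic[0])
    green = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if (y + x) % 2 == 1:
                # A real green sample: copy it through unchanged.
                green[y][x] = float(mosaic[y][x])
            else:
                # Red or blue site: average the green neighbours inside the image.
                neighbours = [
                    mosaic[ny][nx]
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
                    if 0 <= ny < height and 0 <= nx < width
                ]
                green[y][x] = sum(neighbours) / len(neighbours)
    return green


# Tiny made-up mosaic: green sites hold 10, red/blue sites hold 99.
sample = [
    [99, 10, 99, 10],
    [10, 99, 10, 99],
    [99, 10, 99, 10],
    [10, 99, 10, 99],
]
green_plane = interpolate_green(sample)
```

The fancier methods in the list (edge-directed, constant-hue and so on) try to avoid the blurring and colour fringing that this kind of plain averaging produces along edges.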

So for anyone who wants to investigate, here’s something for you to play with:

– One DPX file: A007C025_120403.dpx

– 1.3GB clip, an .mxf directly out of the black box from Sony: A007C025_120403.zip

– 2.5GB of EXR files from the same clip, generated with the following settings:

>>”But hey, you can even write emails with it!”

o_O Omg. Are you serious? O_o

well, i once did my math homework in python which is bundled with Blender :D

This reminds me of Zawinski’s Law:

“Every program attempts to expand until it can read mail. Those programs which cannot so expand are replaced by ones which can.”

Those programmers MUST be real pros.

>>”Those programmers MUST be real pros.”

Or maybe there is another explanation… https://xkcd.com/554/ ;)

The Red Epic would be a better choice, I would think. I have used their free redcinex program and messed around with converting 5k Red files, and it works pretty easily.

Red Epic is still a possible choice. But note that we get this for free, so we can help the sponsor test things well and give them useful experience & reference footage.

Hey,

Davinci Resolve (http://blackmagic-design.com/products/davinciresolve) should afaik get Sony F65 Support soon. Maybe helpful for you cause there is a free version of it.

Btw.: From what you read the Sony is technically much better than the Red and should be delivered with beta software ;-)

“This means you can match the de-mosaicking to your budget”

Does that mean you pay fees to use de-mosaicking algorithms?

More likely it means the time it takes to convert and the number of tries needed to make it perfect. Converting these amounts of footage with the heaviest but best algorithm is likely going to be very slow.

… since probably a company is paying me for F65 import, I’ll take a look and see what I can do.

Cheers,

Peter

Awesome! Keep me updated :)

The plus side of this would be that Blender does NOT need all of these complex processing tools as high-end camera systems have their own applications to pre-process, and manage data.

Without direct support from the camera manufacturers I believe this would be an incredibly hard and labor-intensive task anyway.

Moving on to Blender though: with S-Log, linear etcetera, how will this work with Blender? Doesn’t it simply convert everything to linear 32-bit float at that moment? Is there much progress on the colour pipeline aspect of Blender? (I read a while back that this was something that needs more development.)

RED :

RED cameras, while being great, are not the be-all-end-all of camera technology. No doubt the guys would still have major problems with converting, pre-processing, and exporting so much data.

>> The plus side of this would be that Blender does NOT need all of

>> these complex processing tools as high-end camera systems have

>> their own applications to pre-process, and manage data.

This was exactly my thought. I was also wondering why the title of the post is “Format Woes”? I don’t have experience working with these high-end camera systems, but given the amount and type of data involved isn’t this sort of system specific workflow pretty much to be expected?

Reading the post also made me think of the opposite end of the production pipeline, encoding a final audio-video file. This is another area where many (most?) users seem to punt and use a third-party, often commercial tool, because of Blender’s deficiencies.

Master in OpenEXR. Convert to tiff with proper color management and output a DCP for theatrical release. Use FFmpeg to encode to: ProRes 4444, DNxHD, H264. Where’s the problem at?

I don’t see a reason to export in S-log, as compositing happens linear anyway. S-log is only useful for limited bit-depth formats like 10 bit DPX.

Btw, did you try to run the Windows version of the Sony software under Wine in Linux?

Log gamma curves are also useful for integer based file formats (which DPX is) to get more dynamic range.

OpenEXR, as a floating point format, easily supports much higher precision values as well as overbright ones (i.e. >1), which a log curve normally compresses into that 0-1 range.
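A small sketch of that difference, for the curious. The log curve here is a generic log2 mapping invented for illustration, not S-Log or any DPX standard, and the white and black points are arbitrary assumptions. It counts how many 10-bit integer codes end up covering one stop in the shadows with each encoding.

```python
import math

MAX_CODE = 1023        # 10-bit integer container, as in DPX
SCENE_WHITE = 16.0     # assumed brightest linear value to keep (4 stops above 1.0)
SCENE_BLACK = 2.0 ** -10  # assumed darkest linear value the log curve resolves


def encode_linear(value):
    """Scale linear light straight into the integer range."""
    clamped = min(max(value / SCENE_WHITE, 0.0), 1.0)
    return round(clamped * MAX_CODE)


def encode_log(value):
    """Spread the range SCENE_BLACK..SCENE_WHITE evenly in stops (generic log2 curve)."""
    clamped = min(max(value, SCENE_BLACK), SCENE_WHITE)
    stops_above_black = math.log2(clamped / SCENE_BLACK)
    total_stops = math.log2(SCENE_WHITE / SCENE_BLACK)
    return round(stops_above_black / total_stops * MAX_CODE)


def codes_between(encode, low, high):
    """Number of integer code steps available between two linear values."""
    return encode(high) - encode(low)


# One stop deep in the shadows: linear 0.01 up to 0.02.
shadow_codes_linear = codes_between(encode_linear, 0.01, 0.02)
shadow_codes_log = codes_between(encode_log, 0.01, 0.02)

print("linear 10-bit codes in that stop:", shadow_codes_linear)
print("log 10-bit codes in that stop:", shadow_codes_log)
```

With these assumptions the linear integer encoding has almost no codes left for that shadow stop, while the log curve gives every stop the same share. A float format sidesteps the choice, since it can simply store 0.01 or 16.0 as they are.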

what is wrong with the dpx file? Blender can’t open it? tested with 2.62 and 2.63RC on OSX 64bit

Looking at the dpx, noise is really really not bad, looks a-ok to me :), you want noise, noise is good

16bit is really nice, but it’s also the cause of the large file sizes. That won’t play very nicely when using them for tracking/matchmoving. Best bet is to go 12bit max, or 10bit. You don’t really need all that depth when you, for example, use a sensible amount of color correction, and even then, if you know what you’re doing, you have to be pushing pretty hard to run into bit-depth limits. 10bit is 4 times 8bit, depth wise, and 16bit is 256 times 8bit…
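For reference, the number of levels per channel doubles with every extra bit, so the factors relative to 8 bit are easy to check. A quick sketch (function names are just for illustration); the factor for 16 bit comes out at 256:

```python
def levels(bits):
    """Number of distinct code values per channel at a given bit depth."""
    return 2 ** bits


def ratio_to_8bit(bits):
    """How many times more levels than an 8-bit channel."""
    return levels(bits) // levels(8)


for bits in (8, 10, 12, 16):
    print(f"{bits:2d} bit: {levels(bits):5d} levels, {ratio_to_8bit(bits)}x 8 bit")
```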

+1 for noise

bit depth can be very useful to track features in low or high light environment

But computers don’t work on 10bit and other odd bit depths easily. You will always end up scaling it to a 16 bit buffer anyway and having to correct for that. Why throw away data? If you want to track and matchmove at 4k and 16 bits you’ll need a better computer and disk storage array than Blender’s “Runs on 5 year old hardware” rule.
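One common way to do that scaling is bit replication, sketched below. This is a general technique and an assumption on my part, not a description of what Blender or any particular DPX reader does; the function names are made up.

```python
def expand_10_to_16(code):
    """Scale a 10-bit code to 16 bits by bit replication.

    Shifting left by 6 alone would map 1023 to 65472, leaving white
    slightly too dark. Copying the top 6 bits into the freed low bits
    maps 0 to 0 and 1023 to 65535.
    """
    if not 0 <= code <= 1023:
        raise ValueError("expected a 10-bit code value")
    return (code << 6) | (code >> 4)


def reduce_16_to_10(code):
    """Drop the 6 low bits again to get back to a 10-bit code."""
    if not 0 <= code <= 65535:
        raise ValueError("expected a 16-bit code value")
    return code >> 6


print(expand_10_to_16(0), expand_10_to_16(512), expand_10_to_16(1023))
```

Going up and back down this way returns the original 10-bit value, so nothing is lost by working in the 16-bit buffer.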

Did some more testing on the dpx…. you’d be amazed at how far you can push that pretty 16bit file of yours :)

Thank you for the DPX sample image! I did some testing on it with my patch. The file won’t load correctly, but I found the bug.

Will post an updated patch once it’s fixed.

Patch updated, but the picture is really dark. What were the settings used to export it to DPX?

I guess it is linear, with a 2.2 gamma curve applied automatically.

Try the OCIO features to get a viewable image in sRGB.

Mmmm, the header says it’s log encoded.

But I think I’ve understood. There are two different log colorspaces for DPX files. So far my code has been using the same log -> linear LUT for both, but that’s obviously wrong.

Will try to find the correct LUT for this one.
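For readers wondering what such a log -> linear LUT looks like, here is a sketch using the widely quoted Cineon defaults (reference white at code 685, reference black at code 95, 0.002 density per code value, negative gamma 0.6). Whether either of the two DPX log colorspaces in the patch, or the file exported from the Sony viewer, matches this curve is an assumption and not something the thread confirms.

```python
REF_WHITE = 685           # 10-bit code that maps to linear 1.0
REF_BLACK = 95            # 10-bit code that maps to linear 0.0
DENSITY_PER_CODE = 0.002  # printing density step per code value
NEGATIVE_GAMMA = 0.6      # film negative gamma


def cineon_to_linear(code):
    """Convert one 10-bit Cineon-style log code value to scene-linear."""
    gain = DENSITY_PER_CODE / NEGATIVE_GAMMA
    black_offset = 10 ** ((REF_BLACK - REF_WHITE) * gain)
    value = 10 ** ((code - REF_WHITE) * gain)
    # Shift and rescale so REF_BLACK lands on 0.0 and REF_WHITE on 1.0.
    return (value - black_offset) / (1.0 - black_offset)


# Precompute the table once; a loader then just indexes it per pixel.
LOG_TO_LINEAR_LUT = [cineon_to_linear(code) for code in range(1024)]

print(LOG_TO_LINEAR_LUT[95], LOG_TO_LINEAR_LUT[685], LOG_TO_LINEAR_LUT[1023])
```

Codes above 685 come out brighter than 1.0, which is exactly the overbright range a float image can keep. Using the wrong table for a file gives an image that is too dark or too bright overall, much like the symptom described above.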

More proof that colour management must be handled outside the image loading code in Blender.

I guess there must be some compression out of the camera (so you can record the large data rate), hence the need for post decompression. That decompression will always be proprietary due to the cameras used. But what about external recorders? Can you get a different signal to them that is more open in process? Or do you lose too much color space?

That would be HD-SDI then, so no 4K.

Even though this camera system is a bit clumsy, it’s still way faster than getting a film negative developed and scanned. :P

Just figure it out, it will be worth 10 times the effort!

Wow! All you guys are talking WAY over my head again! ;)

Just a question about the white balance. Is it possible to compensate for it without loss while in the OpenEXR float format, or is it important to set it during the import procedure? I do photography but I do not yet know much about white-balancing algorithms (I assume it’s pretty much non-destructive given enough bits of information). If it were up to me, I would set it to something standard like 5500K or 6500K, and do the correction/grading within Blender (devs are working on that, right? ;)).

You can set white balance during shooting for purposes of monitoring on set and as metadata for post production. (which you can use or ignore at your pleasure)
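On the "without loss" part of the question, here is a hedged sketch. It assumes white balance is applied as a simple per-channel gain on linear float RGB, which is a common simplification and not necessarily what the Sony viewer does during development. The gain values are made up.

```python
def apply_gains(pixel, gains):
    """Multiply each linear RGB channel by its white balance gain."""
    return tuple(channel * gain for channel, gain in zip(pixel, gains))


def invert_gains(gains):
    """Gains that undo a previous white balance adjustment."""
    return tuple(1.0 / gain for gain in gains)


grey_card = (0.18, 0.18, 0.18)      # linear float RGB, mid grey
warmer = (1.25, 1.0, 0.8)           # hypothetical gains, not from any camera

balanced = apply_gains(grey_card, warmer)
restored = apply_gains(balanced, invert_gains(warmer))

print(balanced)
print(restored)
```

In float the round trip comes back to the original values up to rounding error, because nothing gets clipped or quantised along the way. What cannot be recovered later is anything that already clipped on the sensor during the shoot.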

Can versions of these files just a few frames long be made available? That would make playing with them a lot easier.

I hate it when cameras use proprietary file formats, and then require ridiculous extra software to handle it.

“Oh, our cameras only make .tard files, and you require the 800MB SuperShare MegaSuite to open them. It has a cluttered user interface and constantly runs in the background, but it has extremely useful features such as sending your images via morse code to a phone or embedding .wmv files in the pictures! Oh, and you need to register it or the camera won’t work.”

As I understand it, S-Log is a gamma function that enables the camera director to handle the camera as if it were a real movie/film camera and lighting the scene with analog light meters. But as I see on your second picture the material color space is already linear (S-Gamut/Linear), at the top of the interface picture.

The second decision is where and in what working color space the final color grading will take place, (second option from above). I suppose that will be in the 16 bit linear color space within Blender, after or while compositing with the CGI footage.

In short, no need to develop the raw image yet, using a color grading system or via LUT (Color lookup tables). Just change the workspace color space menu button in the viewer interface to linear and go exporting!

Slog on the camera is useless. It’s the old Slog from the F35, which is made for a sensor with 1000% Dynamic Range. The F65 will get a firmware update to give it Slog-2, which has the 1300% Dynamic Range that you need to view Log correctly. Remember though that Log on the F65 is ONLY for HD recording; SStP. When shooting raw, you are shooting Linear, not LOG.

The F65RAWPLAYER is pretty useless, but if you’ve used redcine-x it should be very straight forward.

Davinci Resolve will open the F65 raw files in Beta 8.2 (free), but the files will not look great just yet. Resolve is still using an older Sony SDK for processing the files. Once they update, your footage will hopefully look a lot better.

If you can get your footage into Colorfront, then you will see the best results. Colorfront has been releasing new builds for the F65 almost daily and they are getting better results with F65 footage than the Sony released SDK!!

I agree that downloading the footage is very complicated and at first it’s daunting…I’ve practiced a few times and I can get the footage onto my computer safely without referring to the instructions. When you shoot on the F65, treat it like DIGITAL IMAX…the files are huge. No other camera out there records such a rich image. Those 16 bits of information/pixel really shine when you try to push the image around in post.

Now, bear in mind that the AMPAS is working on making ACES a standard very soon. Things will change once again, but hopefully for good. The only program that can show you F65 footage looking correct in the ACES colorspace is Colorfront.

Next time it might be cool to go for as much open source hardware as possible. I’m not sure if this (http://apertus.org/) is fully usable yet, but it certainly deserves to be looked at. Sorry for commenting on an old post, I just wanted to keep it on topic.